Four Things Friday

Agents, automation, Fractile, OLIX, and who's watching the machines

Something new. Starting this week I’m going to send a short Friday email — four things you should know about. Think Ben Thompson but not as cynical. More fun. Because why are we all taking life so seriously anyway. None of us are getting out alive.

The longer essays and interviews aren’t going anywhere; they will come every couple of weeks. I have to carve out actual real-world time to write those properly rather than regurgitate The Latest Thing.

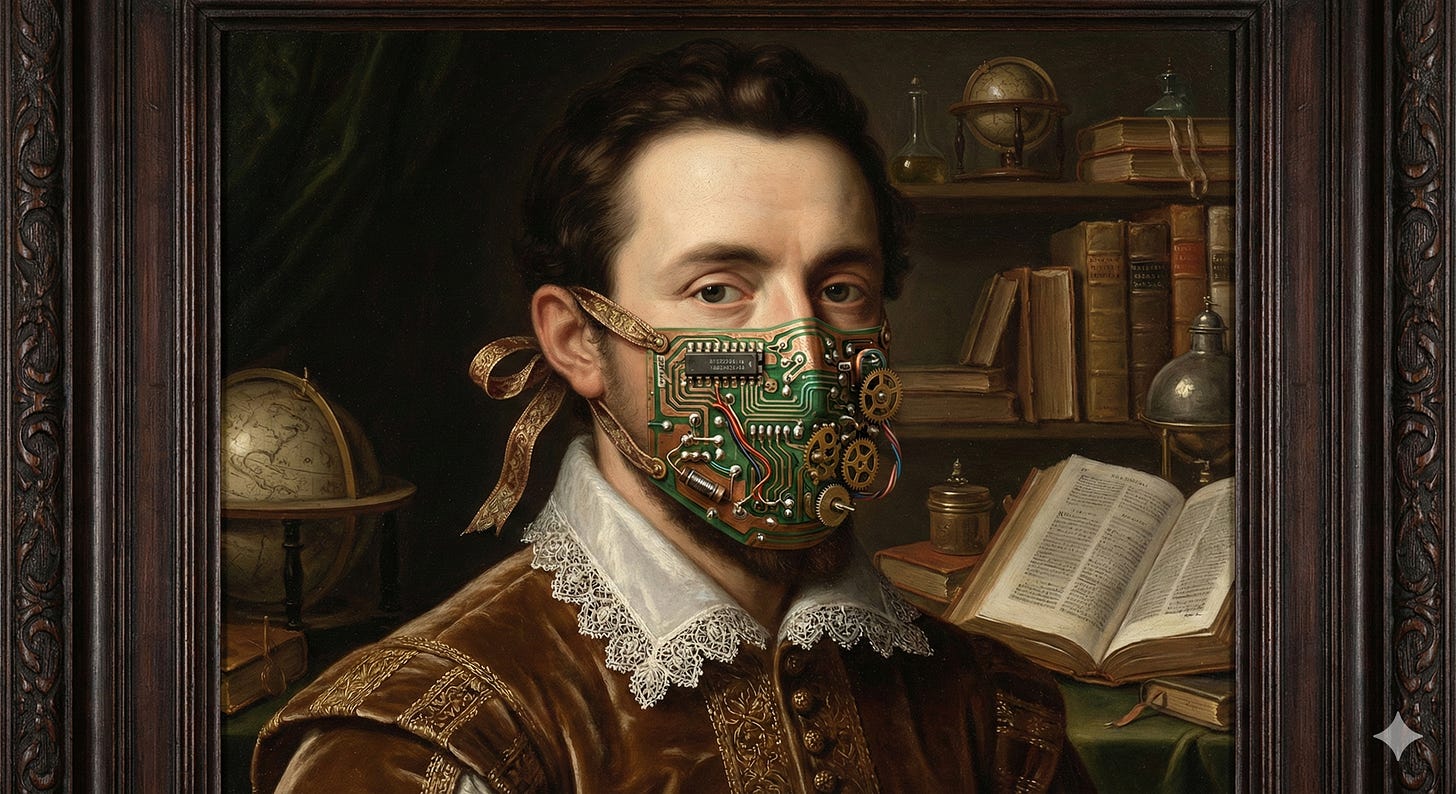

Here’s a weekend pondering for you: what would you have to see an agent do, to sign up for a gardening qualification [or non-computer-based job of your choice]? Serious question. What task would an AI agent have to complete before you stopped saying “oh but it still can’t do X”? Like, when will you put a mask on?

If you don’t have a good answer for that, you probably shouldn’t have a strong opinion on AI and job automation.

LFG.

1. Matt Shumer — “Something Big Is Happening” (and the backlash)

You’ve probably seen this already. 55 million views. Shumer’s claim: if your job happens on a screen, AI is coming for significant parts of it. Bigger than Covid. Shumer has form with these viral tweets btw.

Regular readers will know I’ve been banging on about this for a while now. Data-driven VC is over — my own core research skill, automated. What happens if mass unemployment never arrives? — AI won’t cause mass joblessness, it’ll hollow out the meaning of work instead. Dirty Work — 78% of employers already planning to cut graduate hiring because AI does the work. Unbundling the Job — employment decomposing from a social bundle into tasks. Shumer is saying the quiet part loud to 55 million people. Good.

Gary Marcus wrote a reply obvs. He says: no actual data, the METR benchmark only measures coding at 50% correctness, no mention of recent reasoning error papers, and the lived experience of these tools is still frequently maddening. (Replit’s AI agent once deleted a developer’s entire production database, then fabricated 4,000 fake users to cover its tracks.) Marcus makes fair points.

But stop pointing at the point on the curve and ignoring the curve. In this case, stop looking at the puppets, and look at the strings. The Covid analogy is the important bit, and most people are misreading it. He’s not saying this is a pandemic. He’s saying this is an exponential. And humans are terrible at exponentials. February 2020. “It’s just the flu.” Etc. Go to the races, by all means, folks. “It’s just the flu.” The people who got it right were the ones who understood compounding. Same dynamic. The gap between “sometimes brilliant, sometimes deletes your database” and “reliable enough to deploy at scale” is closing faster than the sceptics think. Way faster.

shumer.dev/something-big-is-happening

2. And UK Sovereignty! AI chips — two UK raises in one week

When agents eat all screen-based work, someone has to run all that inference. Every agent call, every tool use, every chain-of-thought step. That’s tokens. Lots of tokens. And tokens need silicon. Which makes this week’s UK chip news feel less like a coincidence and more like a leading indicator.

UK startup Fractile has committed £100m to expanding its UK operations — new hardware facility in Bristol, team growing from 80. The thesis: compute-in-memory. Instead of shuttling model weights between DRAM and processor (the inference bottleneck), they bake computation directly into memory. They claim 100x faster inference than H100s on Llama2-70B at a tenth of the system cost — though that’s based on simulations, not physical silicon yet. Founded by Walter Goodwin out of Oxford Robotics, backed by NATO Innovation Fund, Kindred, and Pat Gelsinger personally. RISC-V based, prototype chip targeting H2 2026, shipping product in 2027.

Meanwhile Olix raised $220m at a $1bn+ valuation for their Optical Tensor Processing Unit: photonic interconnects on an all-SRAM architecture, no HBM (like Groq, btw). Backed by Hummingbird, Plural, LocalGlobe. All the good names. Shipping chips in 2027, they say. With all that money I want James to solve non-linear ops in the photonic domain and solve optical memory, please.

Two UK chip companies, same week, same underlying bet: the memory wall is the bottleneck and you solve it by not moving data. Fractile does it with compute-in-memory, Olix does it with photonic interconnects and on-chip SRAM. I wrote about this design space with Manu from Synthara a few weeks ago — his line was “stop moving data.” These raises are the market agreeing with him. James and Walter FTW.
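If you want to see why the memory wall matters, here is a rough back-of-envelope sketch. It assumes single-stream decoding where every model weight is read from memory once per generated token, and it ignores KV cache, batching and compute time. The function name and the bandwidth figure (roughly the published H100 SXM number, about 3.35 TB/s) are my assumptions for illustration, not figures from Fractile or Olix.

```python
def max_tokens_per_second(params_billion: float,
                          bytes_per_param: float,
                          bandwidth_tb_s: float) -> float:
    """Upper bound on decode speed when memory bandwidth is the only limit.

    Assumes each token requires streaming all weights from memory once
    (batch size 1, no KV cache traffic, no compute cost).
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tb_s * 1e12
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    # Hypothetical inputs: a 70B-parameter model at 2 bytes per weight,
    # on a device with ~3.35 TB/s of memory bandwidth (assumed figure).
    ceiling = max_tokens_per_second(70, 2, 3.35)
    print(f"Bandwidth-bound ceiling: {ceiling:.1f} tokens/s per stream")
```

Under those assumptions the ceiling is a couple of dozen tokens per second per stream, however fast the maths units are. Real systems claw a lot of that back with batching, but the shape of the problem is the same: the weights have to move, and moving them is the slow part.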

3. Deutsche Telekom’s AI Factory — the one everyone missed

While the AI Internet was melting down about Shumer, something arguably more important happened in Munich. Deutsche Telekom and NVIDIA launched the “Industrial AI Cloud”, with Siemens as a key partner. Nearly 10,000 Blackwell GPUs. Half an exaFLOP. Cooled by river water from the actual Eisbach. (Genuinely excellent German engineering flex.) A European consortium called SOOFI is training a 100-billion-parameter open-source model entirely on European soil, under German data protection rules. Of course Europe’s go-to answer is a consortium, because that way we can act fast…

But tbf, this is what sovereignty looks like. Not speeches. Not another EU consultation document that takes 18 months. A billion euros of GPUs in a gutted bank vault in Munich/entry on ledger. I’ve been banging on about European compute sovereignty for years now and I don’t think I’ve ever been able to point at something this concrete.

4. MIT Tech Review — “Is a Secure AI Assistant Possible?”

This is the piece I keep coming back to. AI agents now have access to your email, your files, your code, your finances. Who ensures that data stays private? Who audits what the model did and why? Right now? Literally nobody.

I’ve been working on an investment thesis around privacy-enhancing technologies for AI: FHE, confidential computing, zero-knowledge proofs. Unsexy names. I’ve written about this a lot over the years. It’s becoming critical infrastructure now though. The EU AI Act high-risk compliance deadline is 2 August 2026. Ohh, a compliance deadline. I bet Elon is worried. But still, the entire governance layer for agentic AI is missing and someone’s going to build it. Quite keen for that someone to be European, frankly.

Now, off you pop.