Compute Is Defence Now

State of the Future: Dispatch from 14th May 2026

You will get these on Thursdays now. Thursday is the new Friday. Fridays are the new Saturdays. Claude Code has increased productivity so much that the four-day work week is real. It’s all very Economic Possibilities for our Grandchildren. Technology means more leisure time, amiright?

No, obvs not. Just more calls, more memos, more work. Busier than ever. Keynes had it all wrong. Apart from his taste in art.

There’s a fashionable take in some circles (often including parts of Government) that LLMs are a dead end: too power-hungry, shit unit economics, diminishing returns on scale. The inevitable consequence, the story goes, is a great unwinding of datacentre capex as workloads migrate to the edge. And the story continues: the “EdgeAI moment we’ve been promised for five years” finally arrives. 95% datacentre / 5% edge becomes 80/20, then 70/30, or whatever number lets you justify shorting Nvidia. Core versus Edge. Pick a winner.

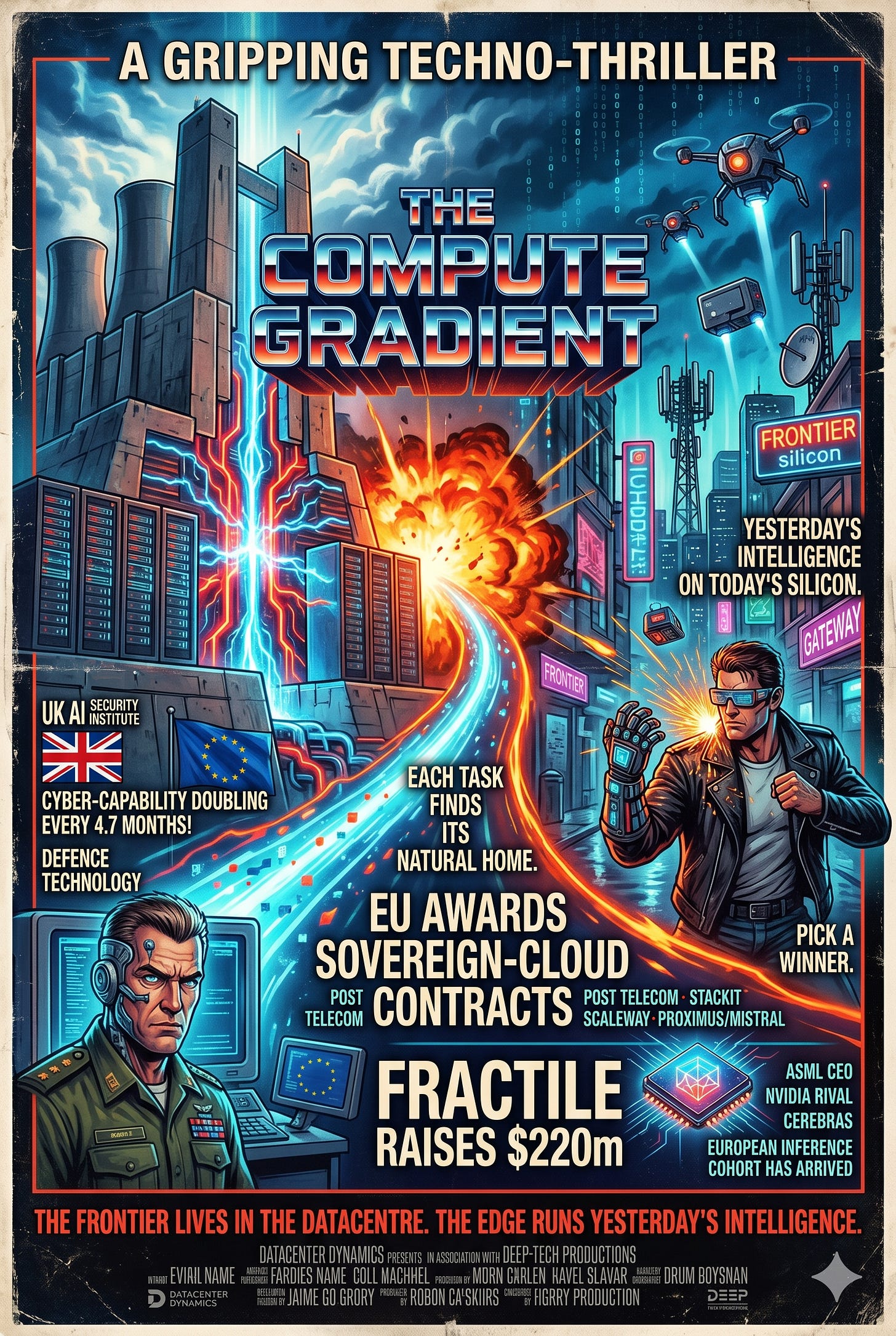

A while ago, in The Compute Gradient, Jonno and I argued that the framing itself is wrong:

“The opportunity is to stop treating this as a zero-sum race for megawatts and instead to shape the stack so each task finds its natural home.”

There is a real opportunity in new edge silicon: low-latency, low-power designs for inference at the device, the gateway, or the cell tower. That part of the bull case is right, and we’re actively looking at it. But the conclusion people draw, that we can wave off datacentre capex and pile into edge instead, doesn’t follow.

The fact is, and will remain: the frontier lives in the datacentre, and increasingly it doesn’t come out. So frontier capabilities in science, AI research and defence will be in the datacentre.

1. AISI Says Cyber Capability Is Doubling Every 4.7 Months And Compute Just Became A Defence Technology

The UK AI Security Institute published on 13 May that autonomous AI cyber-task capability is now doubling every 4.7 months. That’s barely even a timeframe. In November 2025 they had it at 8 months. Then Claude Mythos Preview and GPT-5.5 came in above the 4.7-month line. AISI’s own framing is that it’s unclear whether this is a one-off step change or a new, faster trend. Either way, the slope just got steeper. It’s accelerating!

Numbers. Claude Sonnet 4.5 succeeds 80% of the time on cyber tasks that take a human expert 16 minutes. The newer Mythos Preview checkpoint solved AISI’s hardest range (“Cooling Tower”) 3 times out of 10, the first model to complete it at all. AISI: “the length of cyber tasks that frontier models can complete autonomously has doubled on the order of months, not years.”
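To see why 4.7 versus 8 months matters, here is the naive arithmetic as a short Python sketch. It assumes a clean exponential, which AISI explicitly does not claim, and the 12-month window and the function names are illustrative choices, not anything AISI publishes.

```python
# Toy arithmetic on AISI's headline doubling times.
# Assumes a clean exponential, which AISI itself does not claim.


def growth_factor(months: float, doubling_months: float) -> float:
    """Multiplicative growth in task length over `months` for a given doubling time."""
    return 2 ** (months / doubling_months)


def task_horizon(start_minutes: float, months: float, doubling_months: float) -> float:
    """Naively extrapolated task length in minutes (illustrative, not a forecast)."""
    return start_minutes * growth_factor(months, doubling_months)


if __name__ == "__main__":
    # Compare the November 2025 estimate (8 months) with the 13 May figure (4.7 months).
    for doubling in (8.0, 4.7):
        print(f"doubling every {doubling} months: {growth_factor(12, doubling):.1f}x per year")
    # Start from the 16-minute expert tasks Sonnet 4.5 completes 80% of the time.
    print(f"16-minute tasks after 12 months at 4.7: {task_horizon(16, 12, 4.7):.0f} minutes")
```

At 8 months that is roughly 2.8x a year; at 4.7 months, roughly 5.9x. Run the same toy maths from the 16-minute tasks and you land at about an hour and a half within a year, if (big if) the curve holds and the two figures measure the same thing.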

This is a UK government body. Its readers are the MoD, NCSC and the Cabinet Office. We really need to internalise this, not hand-wave it away with “LLMs are uneconomical and won’t reach AGI”. The implication is that datacentres hosting frontier models are now defence infrastructure, full stop. Anton Leicht’s Cut Off this week (h/t Jack Wiseman) makes the policy version clear: frontier AI access will be rationed through some combination of security gating, compute scarcity and US government leverage, and non-US allies should treat datacentre investment as the price of staying at the top table. I’ll put it more bluntly: datacentres are security and defence infrastructure. Yes, they will burn gas and use all the water in the world. That is going to be the cost of staying at the top table.

This is what changes the edge-vs-core analysis. The strongest models will increasingly not be exposed via open API, they’ll be served through restricted, jurisdiction-controlled channels to vetted customers, or held back entirely. Edge silicon, by construction, runs the previous generation: the model small enough to fit in your power and memory envelope, the model someone was willing to release the weights for. Genuinely useful for a large class of workloads. Maybe real-time robots and drones. But not useful if the question is whether your country can do AI-augmented cyber defence, intelligence analysis, or any of the strategic decisions that need frontier capability.

A country or company that opts out of datacentre capex on edge-economics grounds is opting out of the frontier. The edge runs yesterday’s intelligence on today’s silicon. The datacentre runs tomorrow’s intelligence.

So if frontier datacentres are defence infrastructure, who in Europe is actually building them under terms Europe controls?

2. The EU Just Awarded Sovereign-Cloud Contracts To Companies You Should Know

The European Commission awarded its sovereign-cloud procurement (€180m over 3 years) to 4 consortia. Look at some of these names:

Post Telecom (Luxembourg’s incumbent telco) leading with Clever Cloud and OVHcloud, the two big French independents.

StackIT (Schwarz Group’s German enterprise cloud, owned by the same family that owns Lidl; see the recent Cohere/Aleph Alpha tie-up).

Scaleway (Iliad’s French cloud, the Niel ecosystem).

Proximus (Belgian incumbent telco) leading with S3NS (the Thales/Google JV that runs Google Cloud workloads under French sovereign control), Clarence (Belgian cloud), and Mistral. Yes, the AI lab is in the consortium.

Interesting that AT&T and T-Mobile aren’t in the AI game in the US, yet here Europe’s incumbent telcos are at the very centre of it. Lobbying is a hell of a drug.

Also, Mistral on a sovereign-cloud cap table is another signal that they did a hell of a job hiring Government Affairs people early. Issue #12 covered the Cohere/Aleph Alpha merger as the warning shot for European AI consolidation. This is what comes next: the European sub-frontier model gets bundled into sovereign procurement. The Commission used €180m to put European cloud providers on production-grade public-sector contracts at scale.

The other interesting bit is who didn’t win: T-Systems, Telefónica, the various BT and Vodafone bids. The list is short and aggressively European.

But read those names: are you bullish on the EU AI ecosystem?

Are you balls.

3. Fractile Raised $220m, Long Known, But Look At The Company It Is Keeping

The round everyone has known about for the last six months: UK inference-chip startup Fractile closed a $220m Series B on 13 May, co-led by Accel, Factorial Funds and Founders Fund. First commercial chip in 2027. Anthropic is apparently putting in orders.

Fractile is the in-memory compute play. I’ve written about this lots and lots. But for those not listening at the back: the calculations happen inside the memory itself, rather than data being shuttled back and forth to a separate logic die. Same architectural family as Synthara from the interview series and the wider in-memory cohort I tracked in Issue #8. The memory-wall thesis from Issue #10’s Photonic Foundry Fallacy says this is where the next compute bottleneck breaks. Think memory, not logic. I’m reminded of Project Zeus.

“Why can’t marketing be an arm of sales?”

Fractile was in Issue #1 at £100m, so for SotF readers the $220m headline is long known. The interesting bit is the company it’s now keeping. The same week, Dutch startup Euclyd (backed by the former ASML CEO) is openly fundraising at €100m+, claiming 100x inference power efficiency over Nvidia’s Vera Rubin. Cerebras raised $1bn in February and signed a $20bn / 750MW compute deal with OpenAI. Nvidia bought Groq for $20bn in December. We’re pricing compute in MW again, folks.

The Nvidia rivals have collectively raised something like $5.5bn this year. The open question is whether any of them ships at scale before the H300 successor lands. Fractile says 2027. Tight.

Dealroom had European AI chip startups at $800m and US ones at $4.7bn as of mid-April. Fractile’s $220m takes the European total past $1bn. The gap is still real, but the European inference cohort is finally large enough to count as a category.

4. The Next UX For AI Doesn’t Have A Browser

And finally, UX.

Google shut Project Mariner on 4 May. That was a bet on the visual-screenshot UX paradigm where AI clicks buttons like a human and humans watch through the browser. It lost.

What beat it is API plus CLI. Claude Code in the terminal. OpenAI’s Operator behind an API surface. Anthropic’s Quick Mode for Claude in Chrome, which bypasses the tool-use protocol entirely and uses single-letter commands instead of JSON: 3x faster, with 4-minute browsing tasks done in under 2. All very 3 minute abs. The fastest agent runtime is the one that doesn’t pretend to be a person with a mouse.

This one comes down to money. A vision agent pays for screenshot tokens, DOM serialisation and round-trip latency on every click. A CLI call costs essentially nothing per operation. Frontier models get better at structured reasoning faster than they get cheaper at vision, so the gap widens. Quick Mode’s 3x is an early data point on a curve that keeps moving. Every “agent browser” product pitch from the last 12 months (Comet, Atlas, Mariner itself) was a fight against physics.

The mouse and the browser are a translation layer for eyes. Agents don’t need eyes. The terminal is the agent-native runtime because it was already designed for programs talking to programs with humans peering in. Claude Code’s traction is the proof: developers recognised this immediately. Every agent browser is reinventing bash, badly, at 10x the cost.

One qualification. The Mariner-type products weren’t just betting on vision. They were also betting that the browser is the universal abstraction over legacy software that lacks APIs. That’s partially wrong (most valuable workflows have APIs, or will) and partially still alive (the long tail of internal enterprise tools, government systems, weird SaaS). Vision agents likely survive as a fallback for the messy 15%.

I think the interesting insight here is that CLI/API UX puts pressure on every SaaS company to expose a real API or lose to a competitor that does. Agent-readability becomes either a moat or a death sentence. In a world where agent-to-API traffic dwarfs human-to-screen traffic, the infrastructure layer (compute, interconnect, the datacentres carrying all that traffic) is where the money ends up (pending diligence).

Also Worth Your Time

My friend Tom Walton Pocock published the UK IP Amnesty Act on Wednesday, with two proposals I strongly support:

A 30-day Universal IP Exit forcing UK universities to license out research IP on a standard 10% ordinary-equity term, fully dilutable, no royalties or milestones, with the IP reverting if the founder doesn’t raise £1m within 12 months.

A UK IP Register modelled on the Land Registry, mandatory at patent issuance or transfer.

Both proposals fix the exact bottleneck that Fractile and every UK deep-tech spinout in the Cloudberry pipeline have had to claw through to escape the university. UK research output is world-class (1st on WIPO’s H-index, 3rd on highly-cited papers globally). The commercialisation pipeline is the gap. Walpo names the constraint and proposes specific surgery.
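The Universal IP Exit terms are mechanical enough to sketch in a few lines. What follows is a hypothetical Python model of the two rules as summarised above: a 10% ordinary, fully dilutable stake with no royalties or milestones, and reversion if £1m isn’t raised within 12 months. `SpinoutDeal`, its method names and the example round are illustrative inventions, not taken from the Act.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SpinoutDeal:
    """Hypothetical model of the proposed Universal IP Exit terms (not the Act's text)."""

    university_stake: float = 0.10          # 10% ordinary equity at formation
    raise_threshold_gbp: float = 1_000_000  # founder must raise £1m...
    deadline_months: int = 12               # ...within 12 months, or the IP reverts
    # Deliberately no royalty or milestone fields: the proposal has none.

    def ip_reverts(self, raised_gbp: float, months_elapsed: int) -> bool:
        """True once the deadline has passed without the raise threshold being met."""
        return months_elapsed >= self.deadline_months and raised_gbp < self.raise_threshold_gbp

    def diluted_stake(self, rounds: List[Tuple[float, float]]) -> float:
        """Apply (pre_money_gbp, raise_gbp) rounds in order; ordinary equity dilutes pro rata."""
        stake = self.university_stake
        for pre_money_gbp, raise_gbp in rounds:
            stake *= pre_money_gbp / (pre_money_gbp + raise_gbp)
        return stake


if __name__ == "__main__":
    deal = SpinoutDeal()
    print(deal.ip_reverts(raised_gbp=500_000, months_elapsed=12))
    print(f"{deal.diluted_stake([(4_000_000, 1_000_000)]):.2%}")
```

A £1m raise at a hypothetical £4m pre-money takes the university from 10% to 8%. With no royalty or milestone terms, that diluted stake is the university’s entire return.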

Read it, and share it with Wes Streeting for the next King’s Speech plz.

Thanks for reading, as bloody usual. Do a couple of likes so I can give up the VC game and do this full time, would you?